|

Jianyu Chen

I am an Assistant Professor in the Institute for Interdisciplinary Information Sciences (IIIS) at Tsinghua University. Prior to joining IIIS, I worked with Prof. Masayoshi Tomizuka at the University of California, Berkeley, where I received my Ph.D. degree in 2020. I received my bachelor's degree from Tsinghua University in 2015. My research interests include embodied AI, robotics, reinforcement learning, and control. I lead the Intelligent Systems and Robotics Laboratory (ISR Lab), where we conduct cutting-edge research on VLA, WAM, and RL. I also founded RobotEra, a unicorn robotics startup aiming to develop generalist robots. Please contact me at jianyuchen@tsinghua.edu.cn.

Jianyu Chen is an assistant professor at the Institute for Interdisciplinary Information Sciences of Tsinghua University and the founder of RobotEra. He obtained his bachelor's degree from Tsinghua University and his doctoral degree from the University of California, Berkeley. In recent years, he has been engaged in cutting-edge research and industrialization in the interdisciplinary field of robotics and artificial intelligence. His goal is to build general-purpose humanoid robots with high performance and high intelligence. He has published more than 70 papers in top international conferences and journals in robotics and artificial intelligence. Some of his papers have been shortlisted for best paper awards at international conferences such as RSS 2024, L4DC 2022, IEEE IV 2021, and IFAC MECC 2021. He has been named to the "Forbes China 30 Under 30", "Hurun U35 Chinese Entrepreneurial Pioneers", and "36Kr 36 Under 36" lists, as well as Qbit's "Top 30 Influential Figures in Artificial Intelligence" list. |

|

Selected Research

|

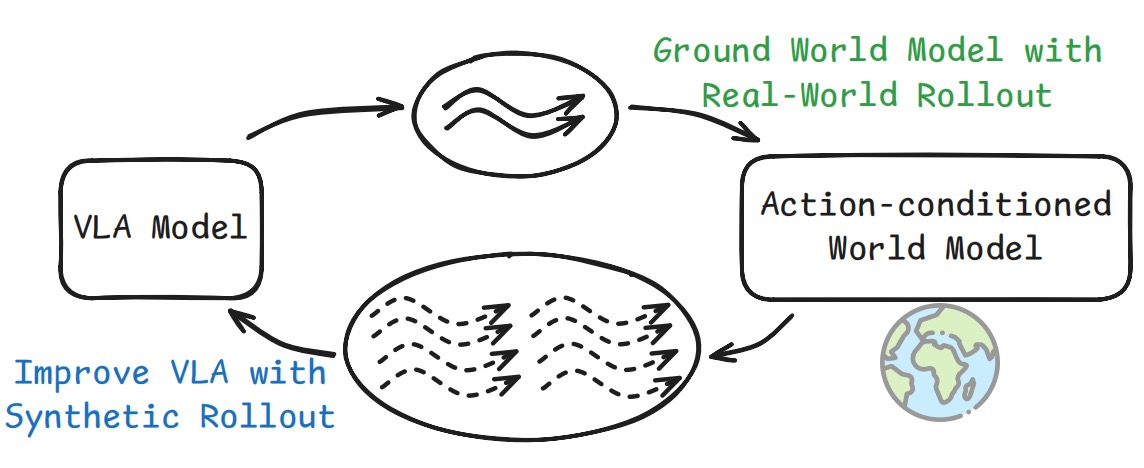

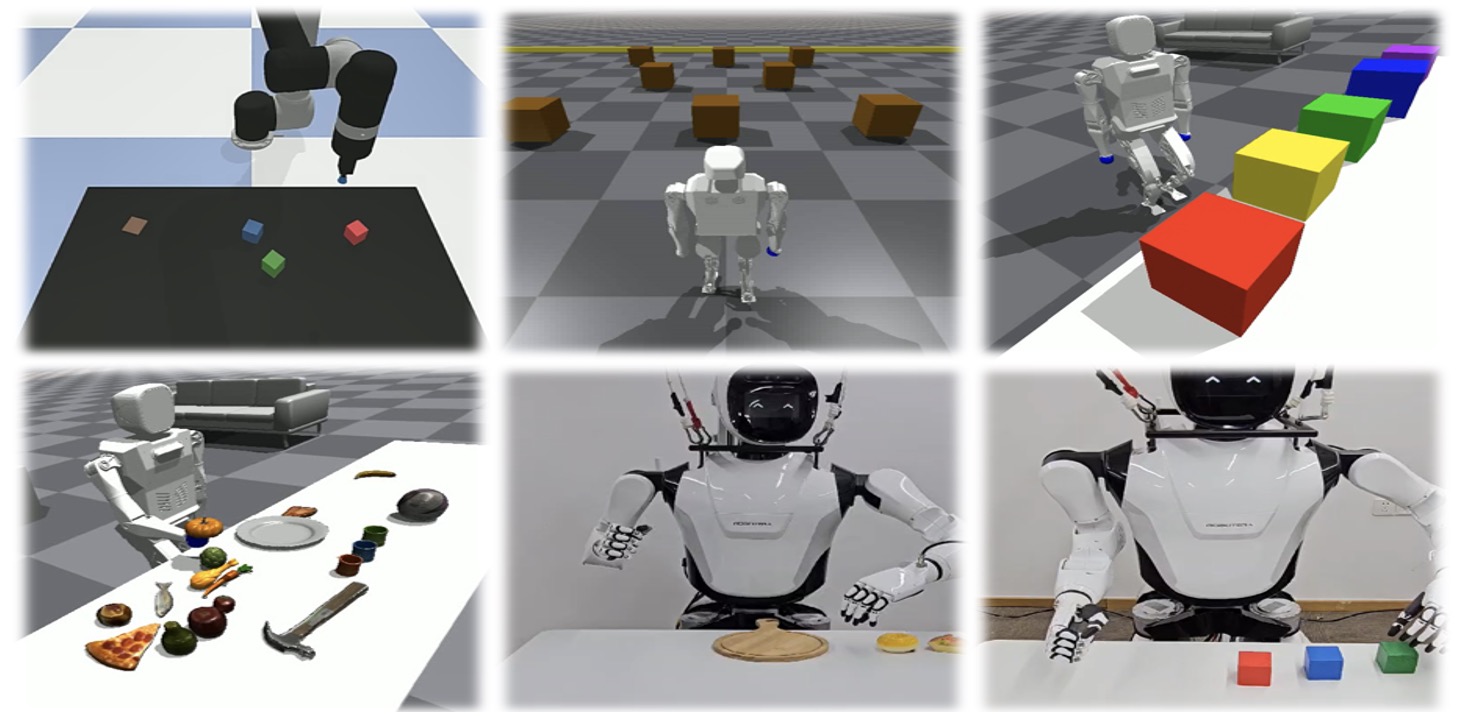

VLAW: Iterative Co-Improvement of Vision-Language-Action Policy and World Model

Yanjiang Guo*, Tony Lee*, Lucy Xiaoyang Shi*, Jianyu Chen, Percy Liang, Chelsea Finn arXiv, 2026 project page / arXiv We iteratively improve a VLA policy and an action-conditioned world model. |

|

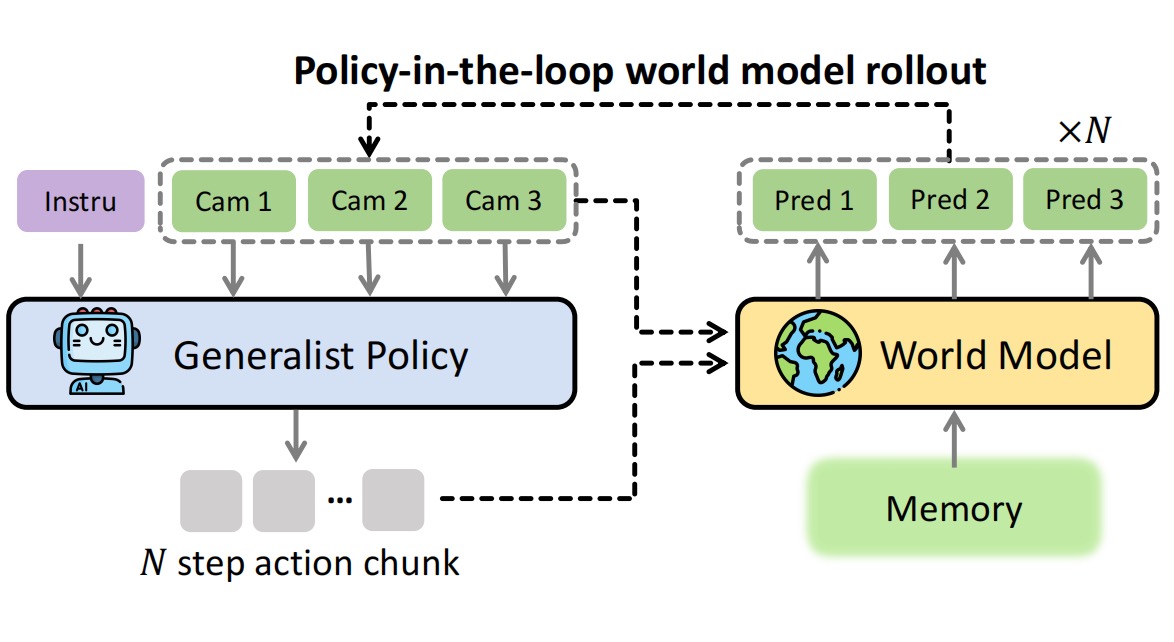

Ctrl-World: A Controllable Generative World Model for Robot Manipulation

Yanjiang Guo*, Lucy Xiaoyang Shi*, Jianyu Chen, Chelsea Finn International Conference on Learning Representations (ICLR), 2026 project page / arXiv We train a controllable generative world model that can be used to evaluate and improve generalist robot policies. |

|

Video Prediction Policy: A Generalist Robot Policy with Predictive Visual Representations

Yucheng Hu*, Yanjiang Guo*, Pengchao Wang, Xiaoyu Chen, Yen-Jen Wang, Jianke Zhang, Koushil Sreenath, Chaochao Lu, Jianyu Chen International Conference on Machine Learning (ICML), 2025 (Spotlight) project page / code / arXiv We fine-tune a video diffusion foundation model to guide manipulation policy learning. |

|

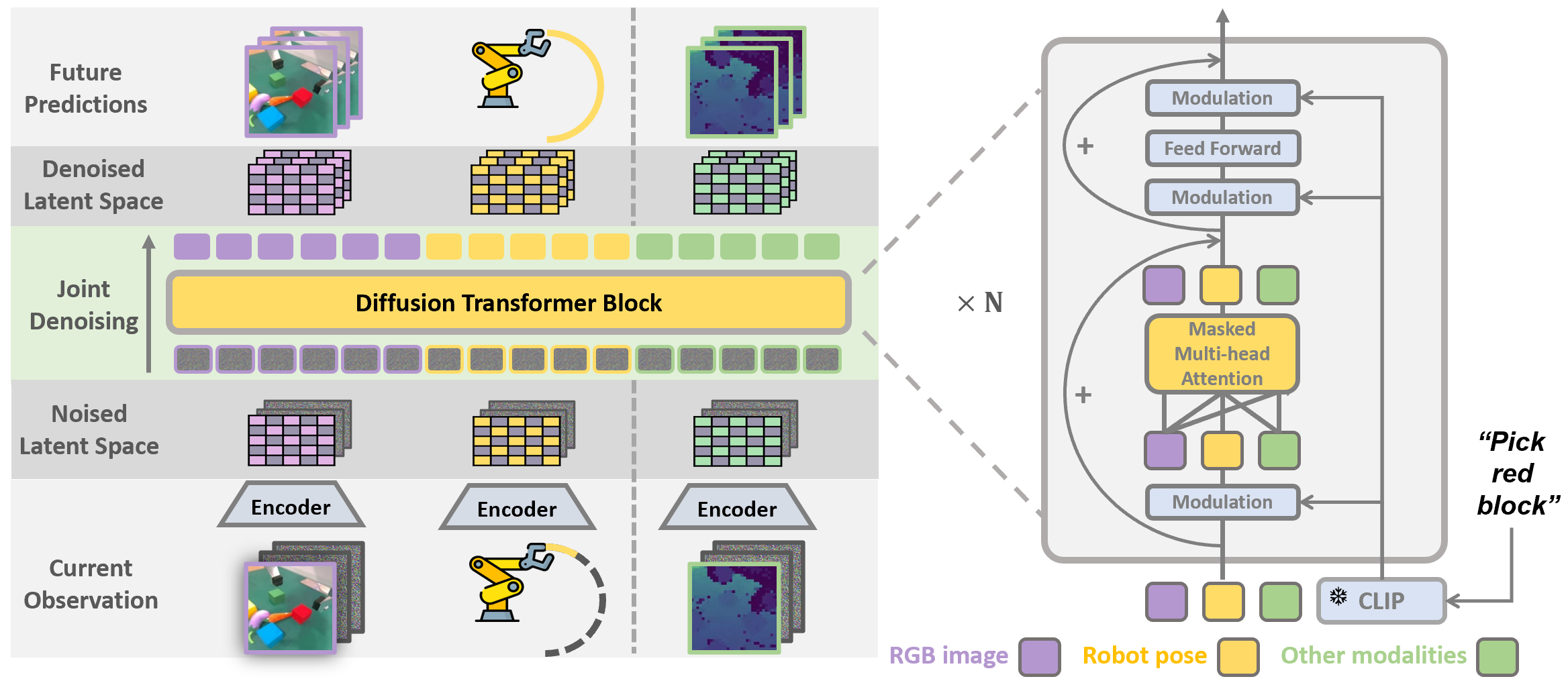

Prediction with Action: Visual Policy Learning via Joint Denoising Process

Yanjiang Guo*, Yucheng Hu*, Jianke Zhang, Yen-Jen Wang, Xiaoyu Chen, Chaochao Lu#, Jianyu Chen# Advances in Neural Information Processing Systems (NeurIPS), 2024 project page / code / arXiv We jointly predict future images and robot actions in a unified denoising network. |

|

BagelVLA: Enhancing Long-Horizon Manipulation via Interleaved Vision-Language-Action Generation

Yucheng Hu*, Jianke Zhang*, Yuanfei Luo*, Yanjiang Guo, Xiaoyu Chen, Xinshu Sun, Kun Feng, Qingzhou Lu, Sheng Chen, Yangang Zhang, Wei Li, Jianyu Chen arXiv, 2026 arXiv We unify linguistic planning, visual forecasting, and action generation for long-horizon manipulation. |

|

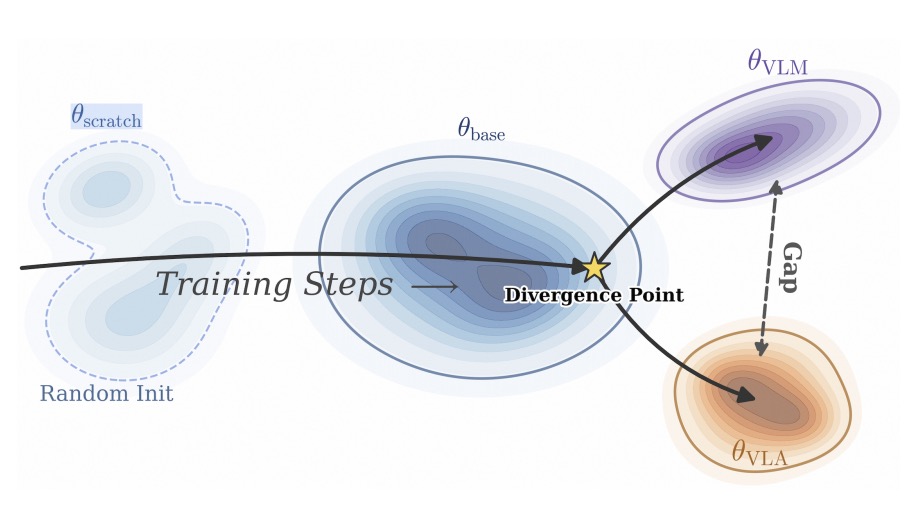

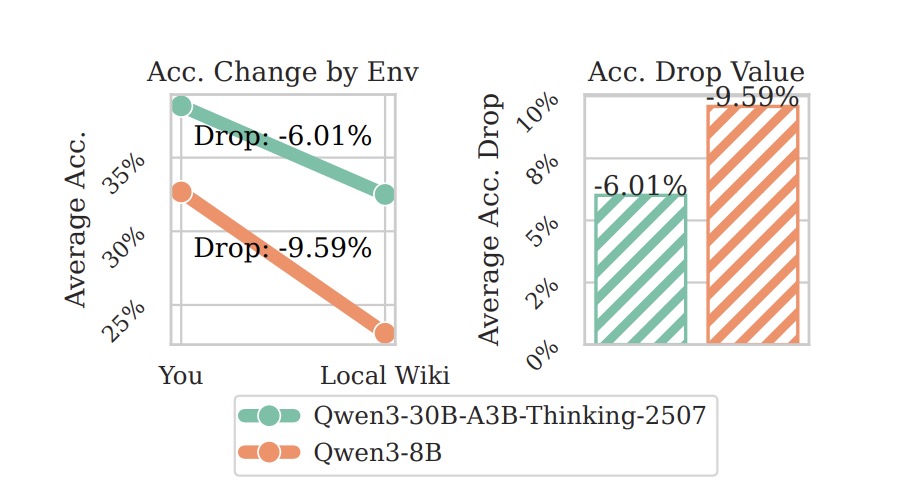

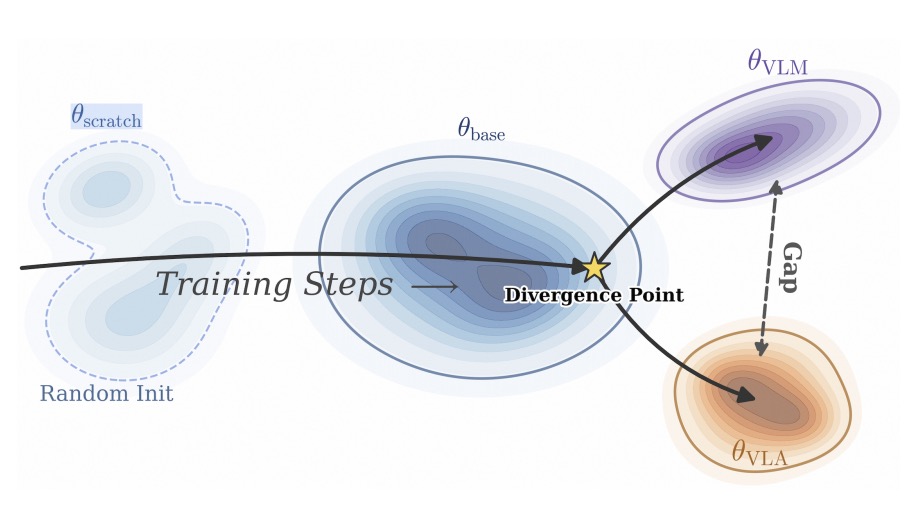

VLM4VLA: Revisiting Vision-Language-Models in Vision-Language-Action Models

Jianke Zhang, Xiaoyu Chen, Yanjiang Guo, Yucheng Hu, Jianyu Chen International Conference on Learning Representations (ICLR), 2026 arXiv / code We revisit the role of pretrained VLM backbones in VLA performance. |

|

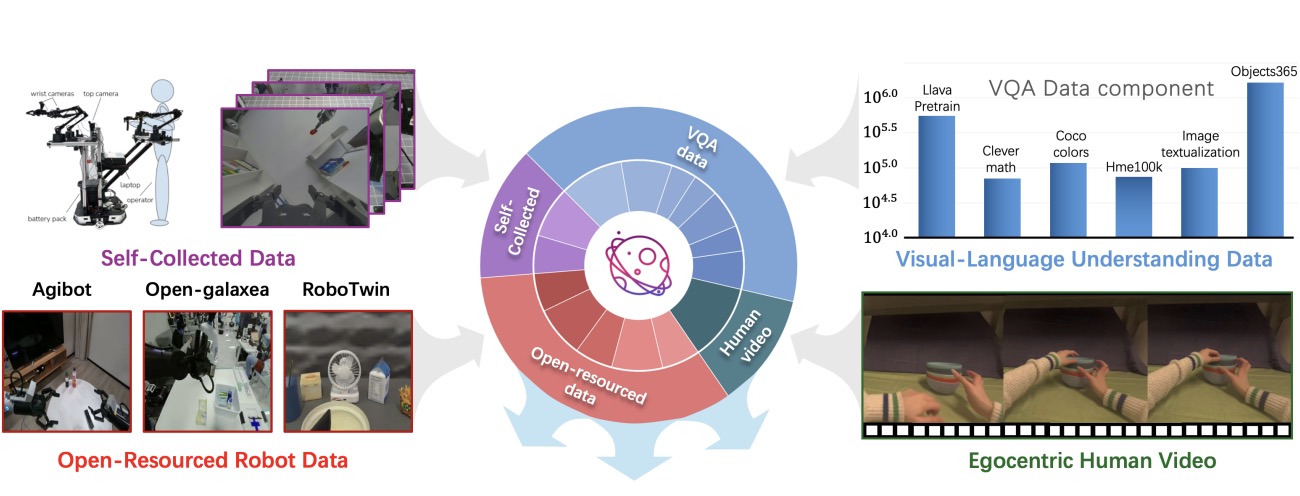

Villa-x: Enhancing Latent Action Modeling in Vision-Language-Action Models

Xiaoyu Chen*, Hangxing Wei*, Pushi Zhang*, Chuheng Zhang*, Kaixin Wang*, Yanjiang Guo, Rushuai Yang, Yucen Wang, Xinquan Xiao, Li Zhao, Jianyu Chen, Jiang Bian International Conference on Learning Representations (ICLR), 2026 project page / arXiv We incorporate latent action representations into VLA for stronger generalization. |

|

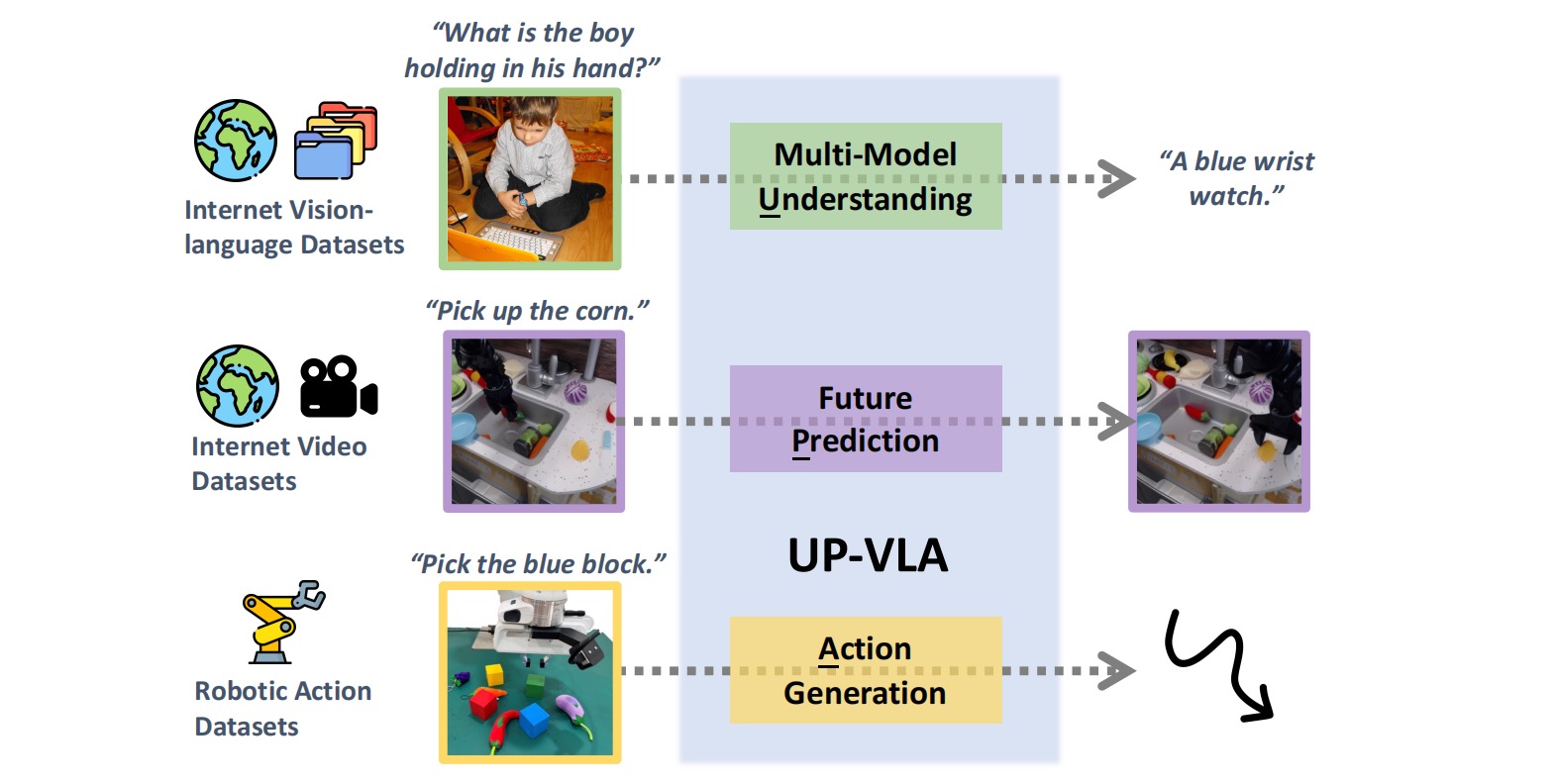

UP-VLA: A Unified Understanding and Prediction Model for Embodied Agent

Jianke Zhang*, Yanjiang Guo*, Yucheng Hu, Xiaoyu Chen, Jianyu Chen International Conference on Machine Learning (ICML), 2025 arXiv / code We unify multimodal understanding and future prediction in a single VLA model. |

|

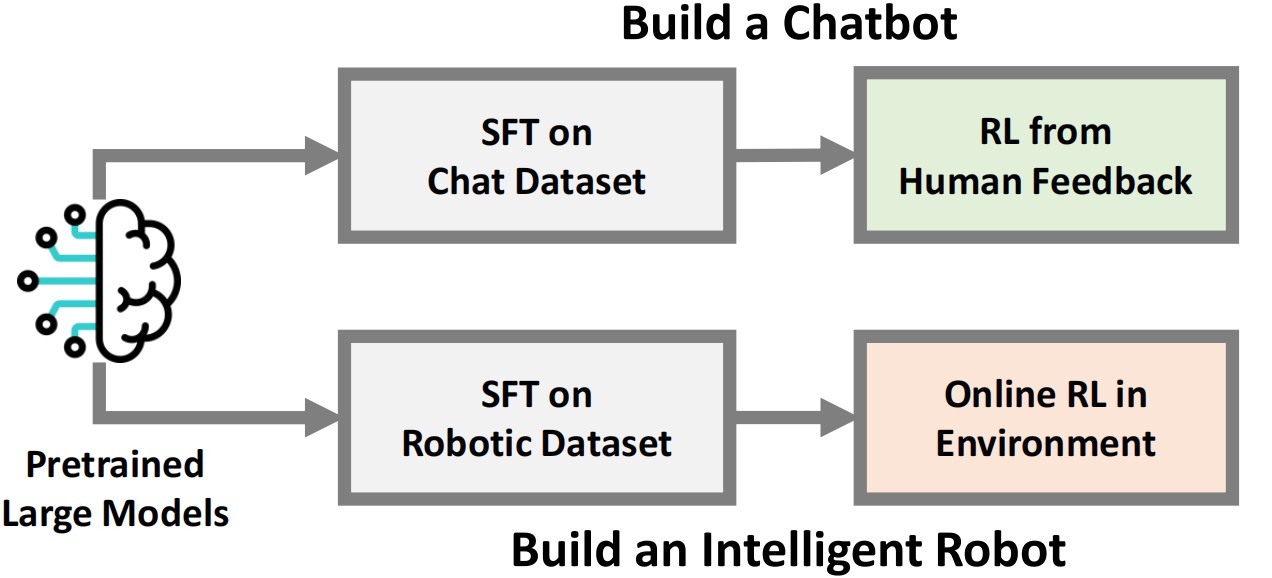

Improving Vision-Language-Action Model with Online Reinforcement Learning

Yanjiang Guo*, Jianke Zhang*, Xiaoyu Chen*, Xiang Ji, Yen-Jen Wang, Yucheng Hu, Jianyu Chen International Conference on Robotics and Automation (ICRA), 2025 arXiv We explore stable online RL for large VLA models. |

|

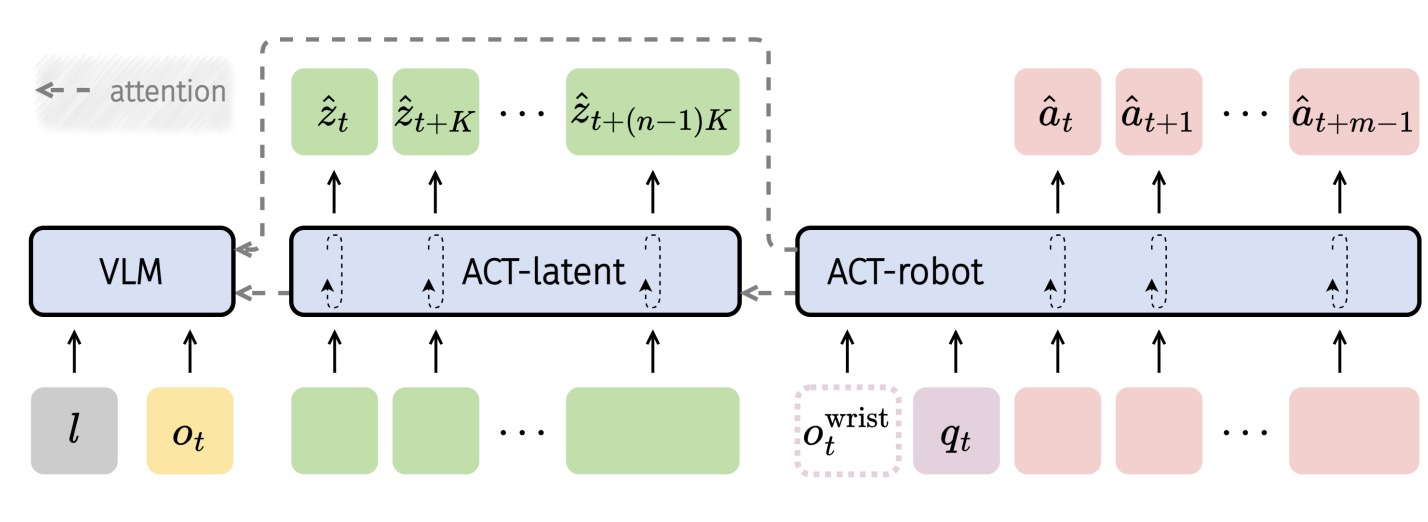

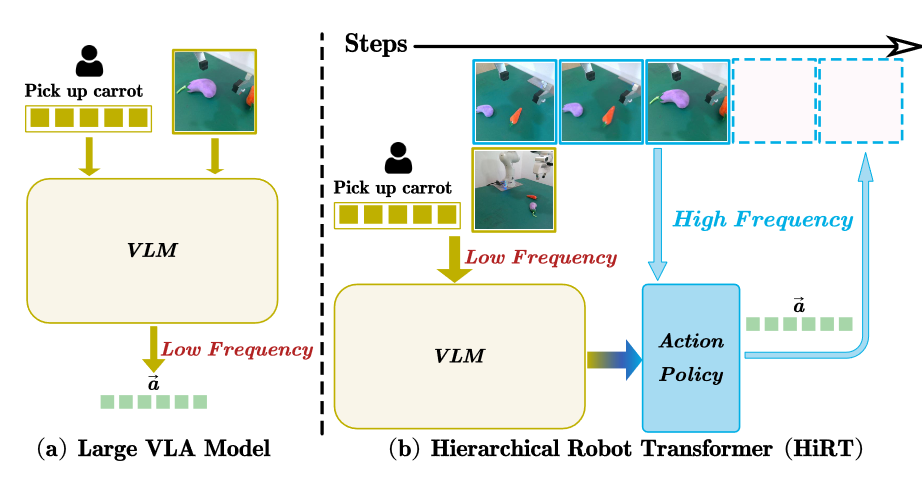

HiRT: Enhancing Robotic Control with Hierarchical Robot Transformers

Jianke Zhang*, Yanjiang Guo*, Xiaoyu Chen, Yen-Jen Wang, Yucheng Hu, Chengming Shi, Jianyu Chen Conference on Robot Learning (CoRL), 2024 arXiv We couple pretrained VLM understanding with hierarchical control for robot policies. |

|

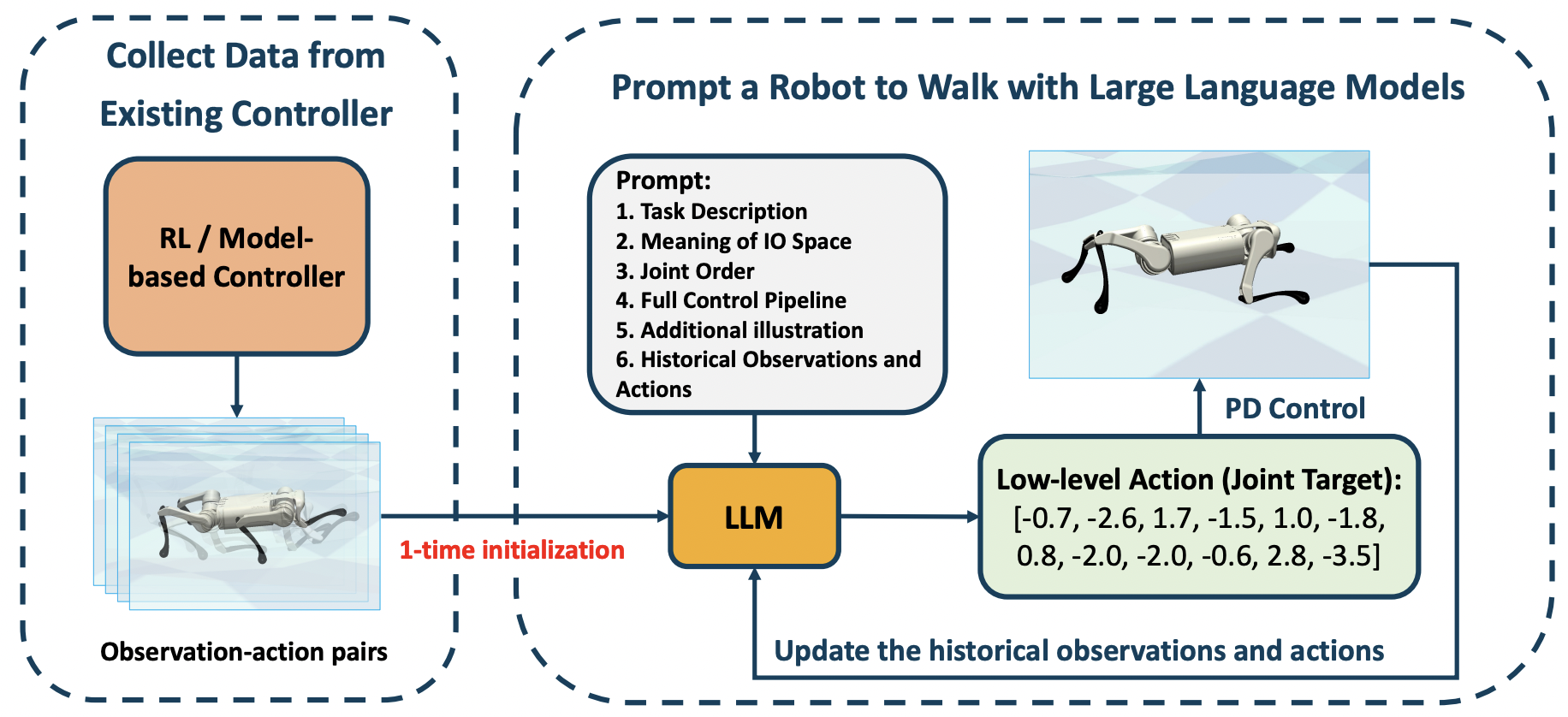

Prompt a Robot to Walk with Large Language Models

Yen-Jen Wang, Bike Zhang, Jianyu Chen, Koushil Sreenath IEEE Conference on Decision and Control (CDC), 2024 arXiv We explore LLM-based low-level feedback control for locomotion. |

|

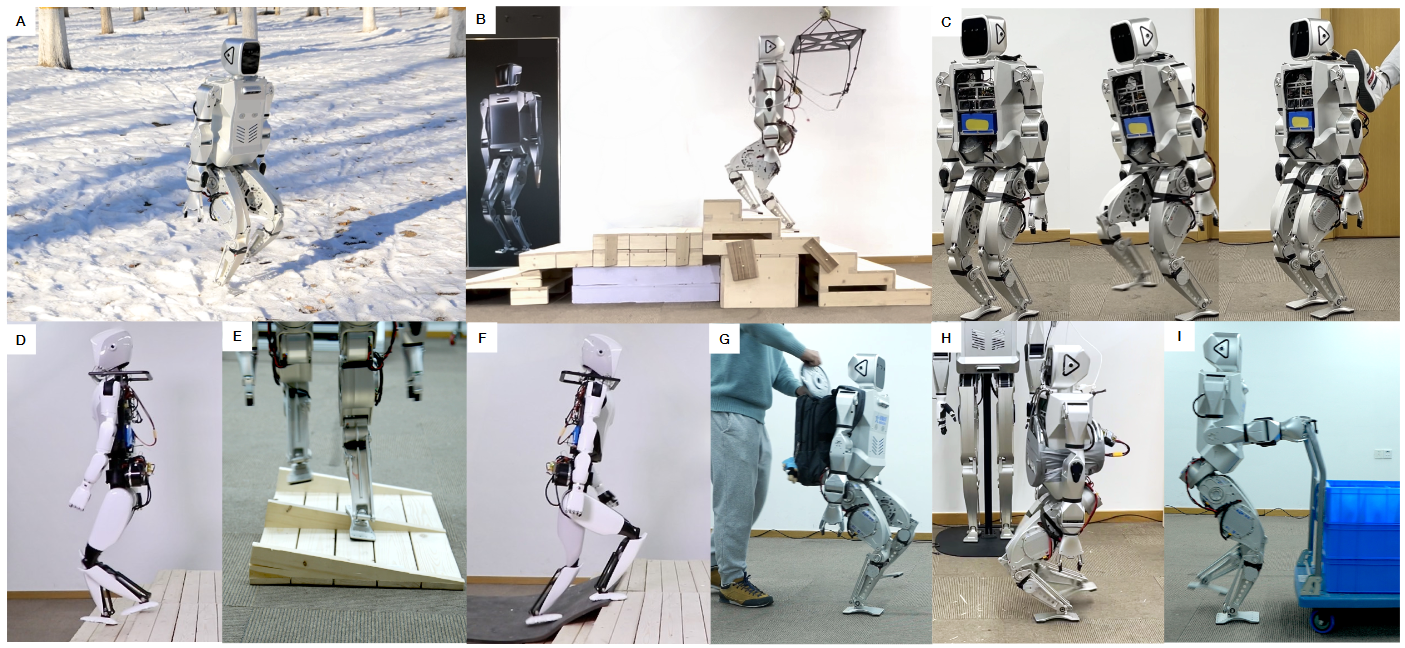

Advancing Humanoid Locomotion: Mastering Challenging Terrains with Denoising World Model Learning

Xinyang Gu*, Yen-Jen Wang*, Xiang Zhu*, Chengming Shi*, Yanjiang Guo, Yichen Liu, Jianyu Chen Robotics: Science and Systems (RSS), 2024 (Best Paper Award Finalist) project page / arXiv We train a humanoid robot to master challenging terrain with zero-shot sim-to-real transfer. |

|

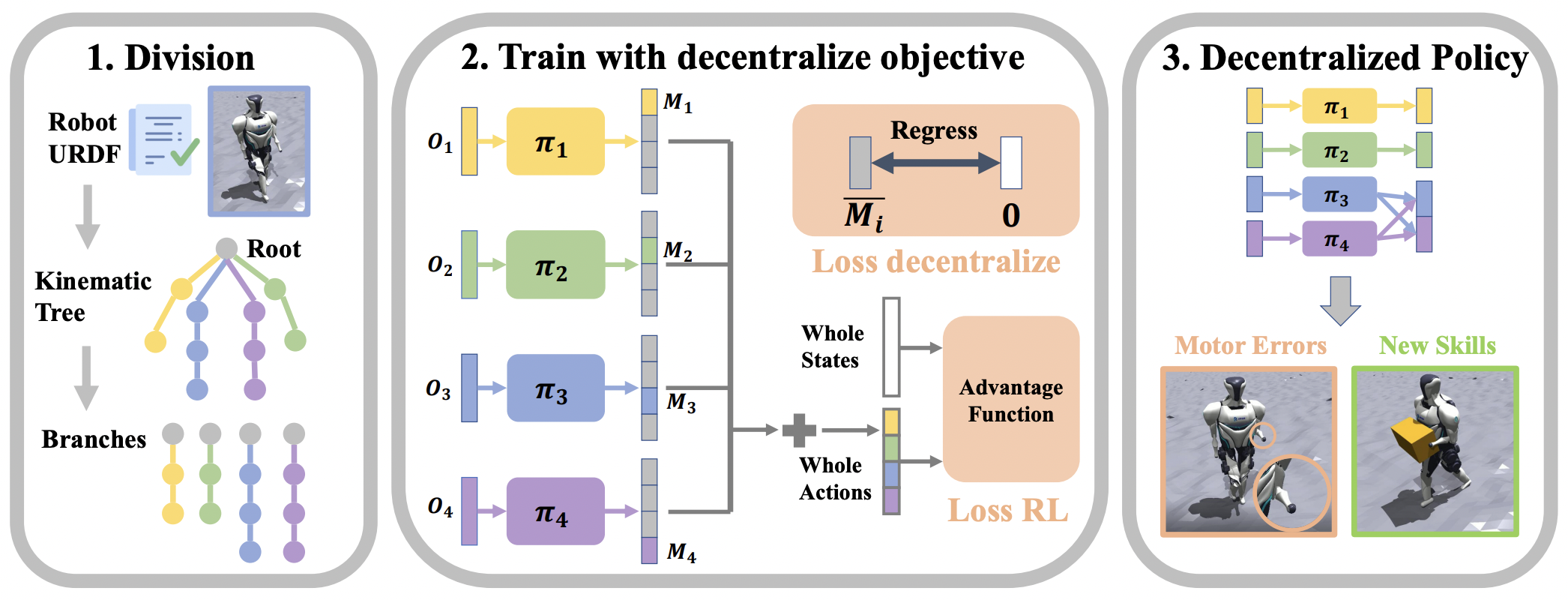

Decentralized Motor Skill Learning for Complex Robotic Systems

Yanjiang Guo, Zheyuan Jiang, Yen-Jen Wang, Jingyue Gao, Jianyu Chen IEEE Robotics and Automation Letters (RA-L), 2023 arXiv We discover decentralized motor groups for robust control of complex robotic systems. |

|

ProCeedRL: Process Critic with Exploratory Demonstration Reinforcement Learning for LLM Agentic Reasoning

Jingyue Gao, Yanjiang Guo, Xiaoshuai Chen, Jianyu Chen Annual Meeting of the Association for Computational Linguistics (ACL), 2026 arXiv Guided RL for general agentic reasoning in language models. |

|

MARGE: Improving Math Reasoning for LLMs with Guided Exploration

Jingyue Gao, Runji Lin, Keming Lu, Bowen Yu, Junyang Lin, Jianyu Chen International Conference on Machine Learning (ICML), 2025 arXiv Guided exploration improves LLM math reasoning on harder problems. |

|

DoReMi: Grounding Language Model by Detecting and Recovering from Plan-Execution Misalignment

Yanjiang Guo*, Yen-Jen Wang*, Lihan Zha*, Jianyu Chen International Conference on Intelligent Robots and Systems (IROS), 2024 project page / arXiv We monitor execution and recover from plan-execution misalignment for LLM-guided robot control. |

|

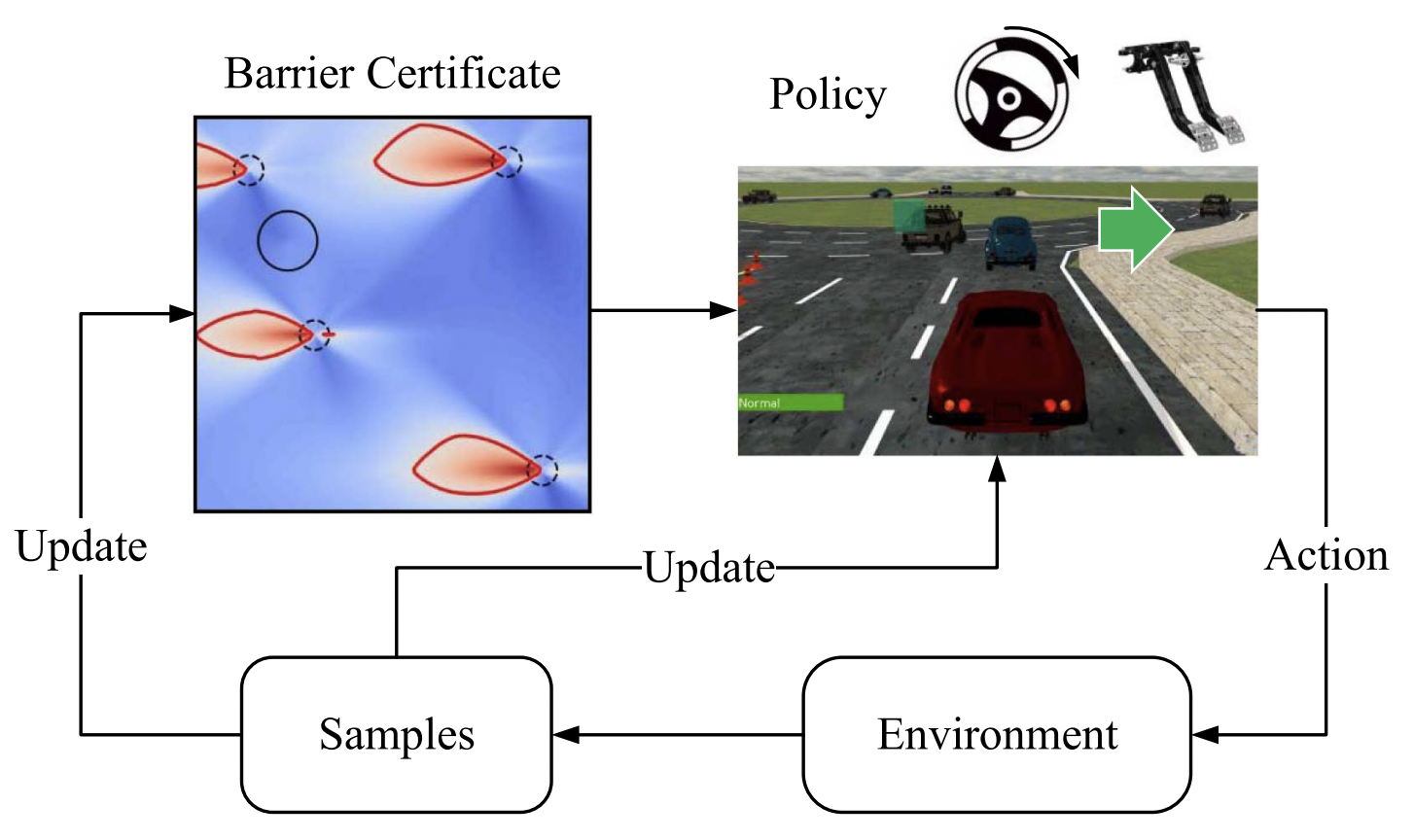

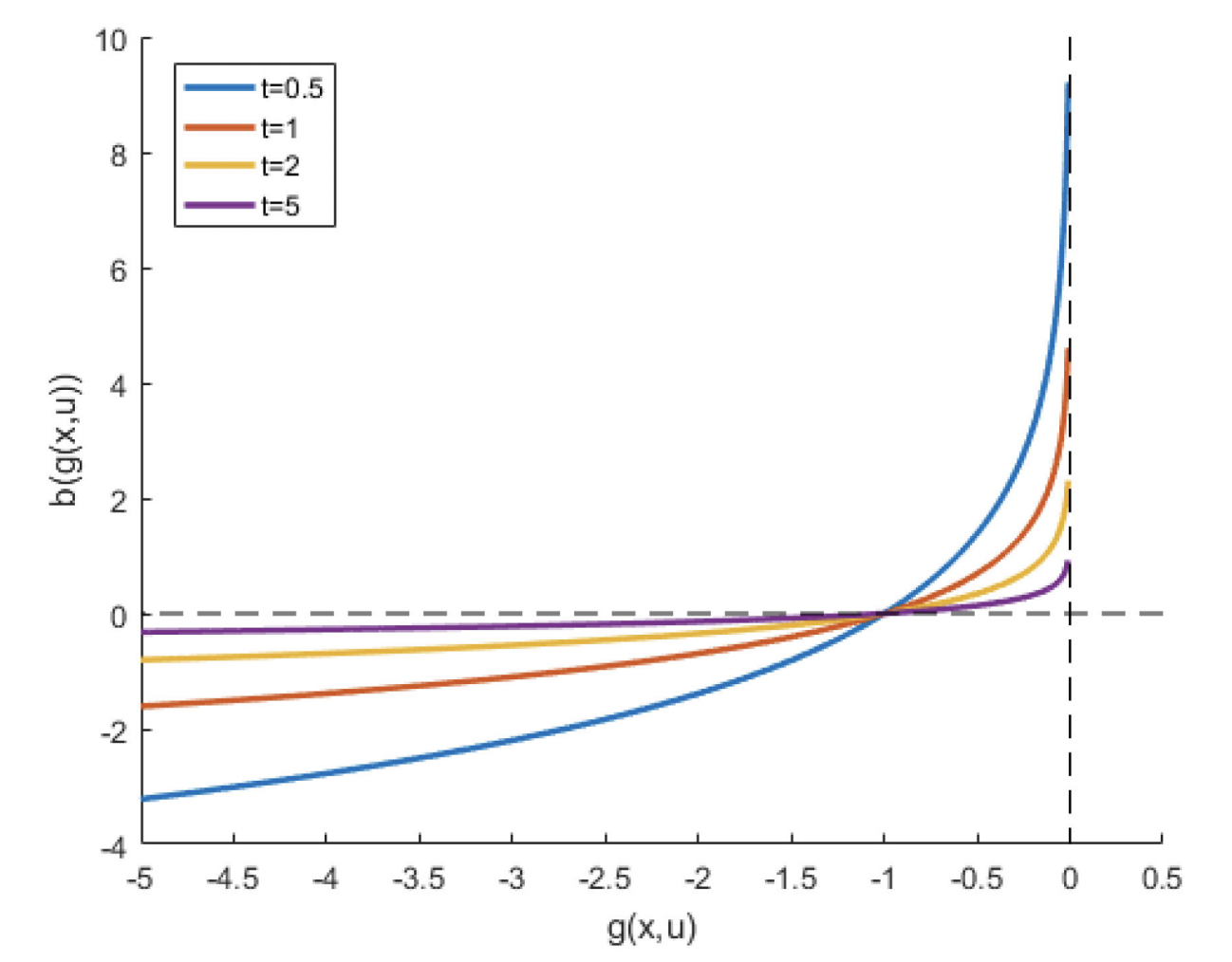

Model-Free Safe Reinforcement Learning through Neural Barrier Certificate

Yuxuan Jiang, Yichen Liu, Jianyu Chen, Shengbo Eben Li IEEE Robotics and Automation Letters (RA-L), 2023 We propose a neural barrier certificate approach for model-free safe RL. |

|

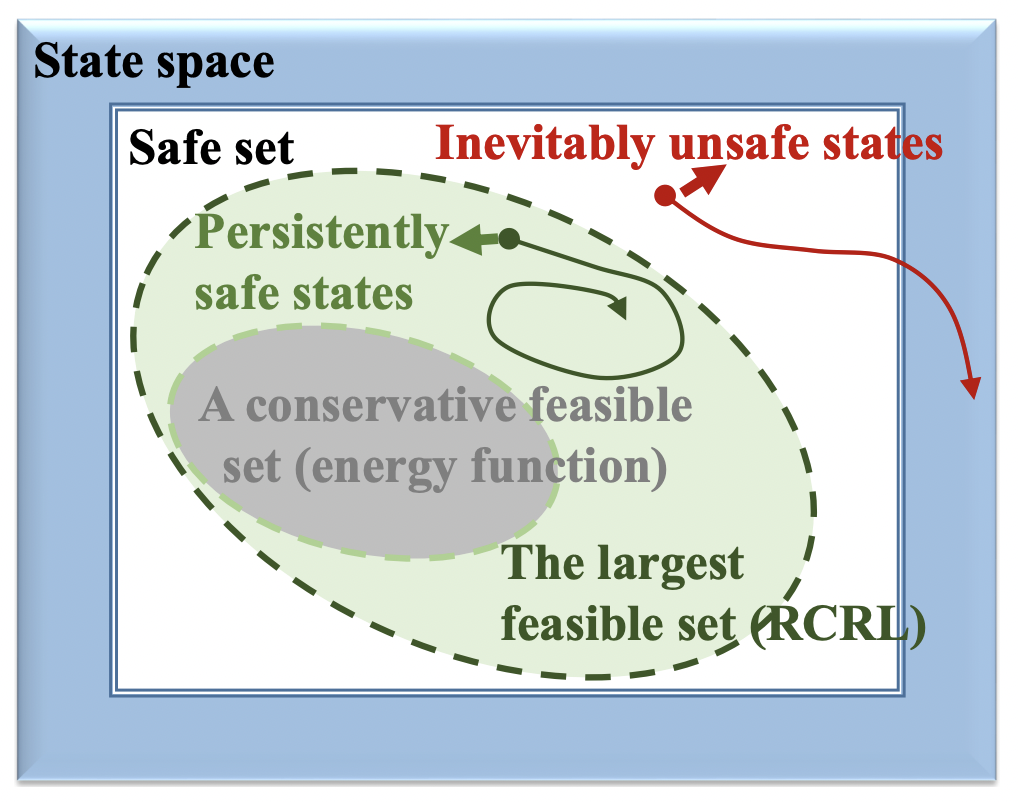

Reachability Constrained Reinforcement Learning

Dongjie Yu, Haitong Ma, Shengbo Eben Li, Jianyu Chen International Conference on Machine Learning (ICML), 2022 We use reachability constraints to improve safety in RL. |

|

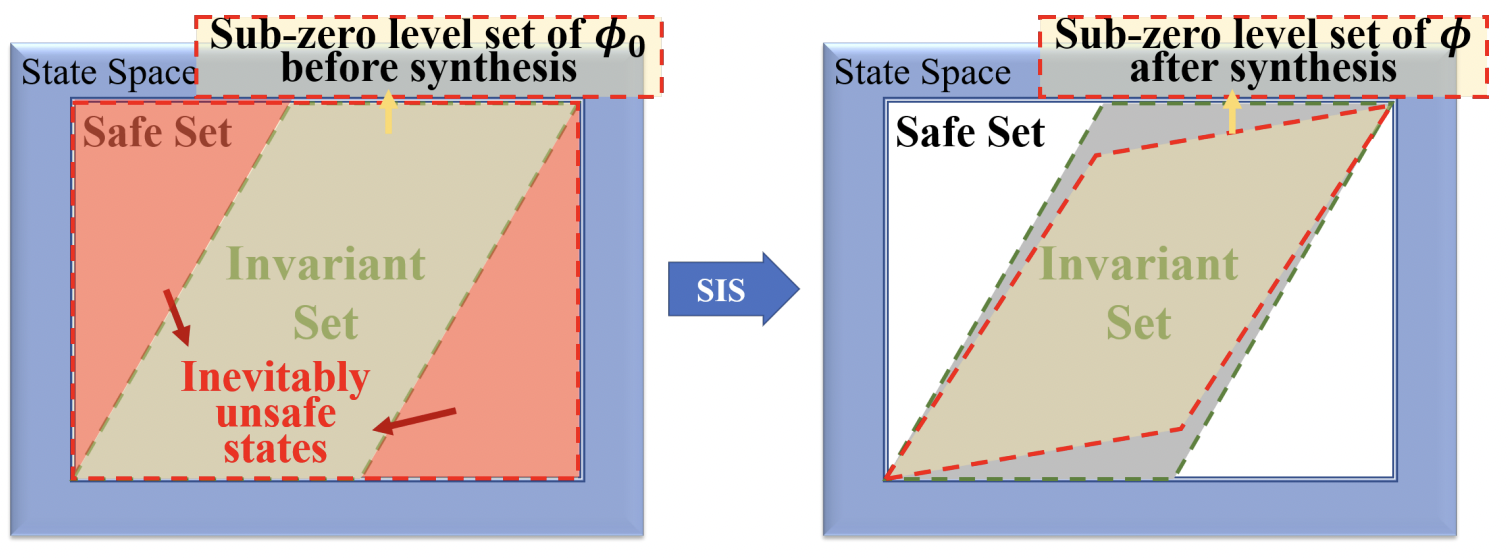

Joint Synthesis of Safety Certificate and Safe Control Policy using Constrained Reinforcement Learning

Changliu Liu, Shengbo Eben Li, Sifa Zheng, Jianyu Chen Annual Conference on Learning for Dynamics and Control (L4DC), 2022 (Best Paper Award Finalist) We jointly learn a safe control policy and a safety certificate. |

|

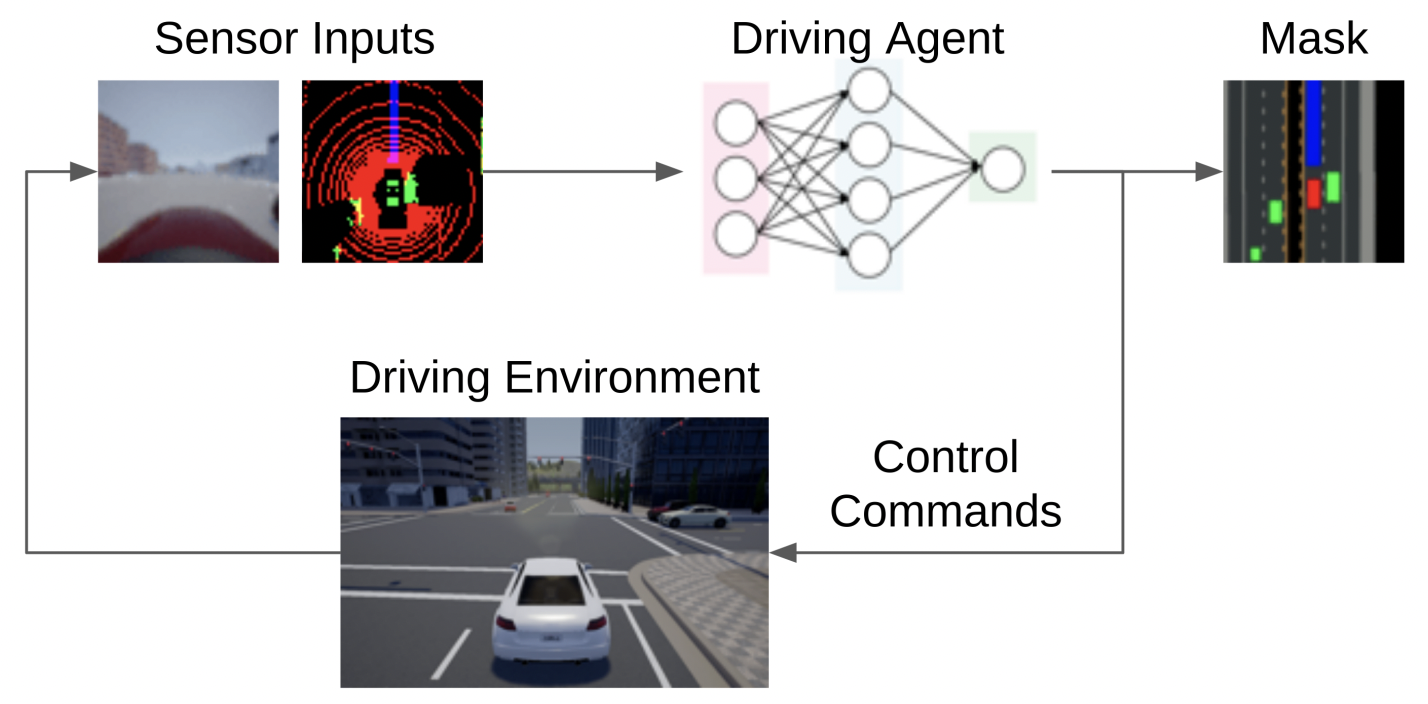

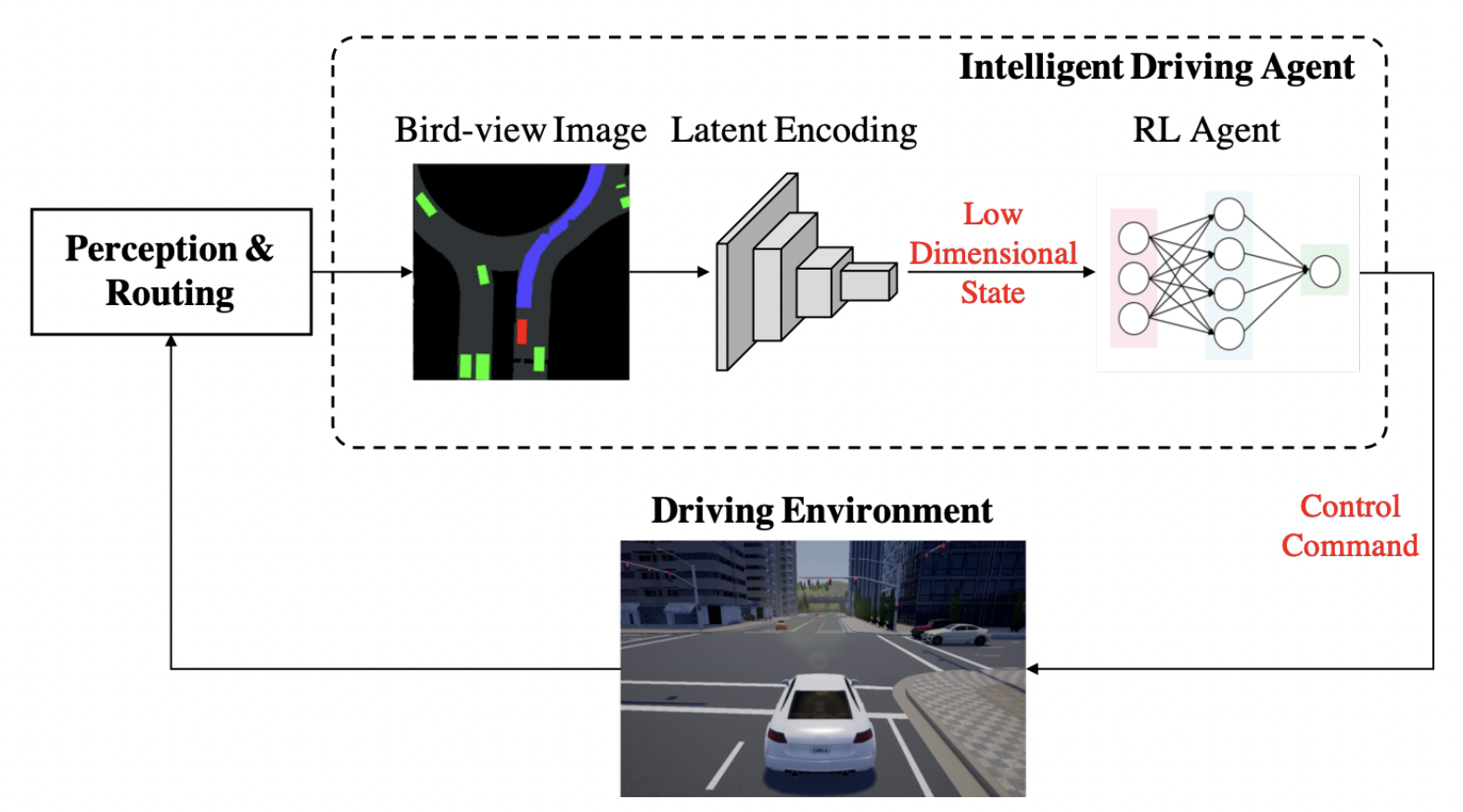

Interpretable End-to-End Urban Autonomous Driving with Latent Deep Reinforcement Learning

Jianyu Chen, Shengbo Eben Li, Masayoshi Tomizuka IEEE Transactions on Intelligent Transportation Systems (T-ITS), 2021 An interpretable latent deep RL framework for end-to-end urban driving. |

|

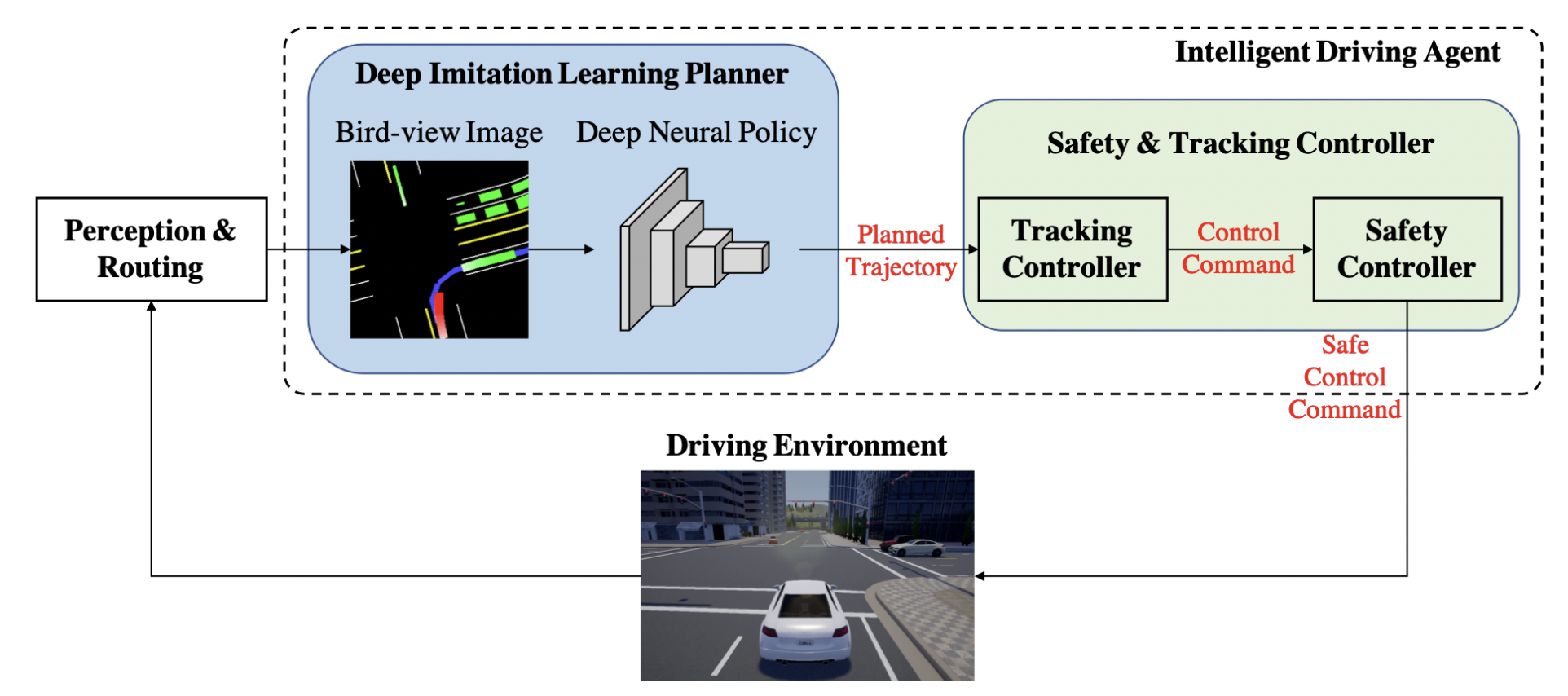

Deep Imitation Learning for Autonomous Driving in Generic Urban Scenarios with Enhanced Safety

Jianyu Chen, Bodi Yuan, Masayoshi Tomizuka IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), 2019 A data-efficient deep imitation learning framework for safe urban driving. |

|

Model-free Deep Reinforcement Learning for Urban Autonomous Driving

Jianyu Chen, Bodi Yuan, Masayoshi Tomizuka IEEE Intelligent Transportation Systems Conference (ITSC), 2019 A model-free deep RL method for urban autonomous driving. |

|

Autonomous Driving Motion Planning With Constrained Iterative LQR

Jianyu Chen, Wei Zhan, Masayoshi Tomizuka IEEE Transactions on Intelligent Vehicles (T-IV), 2019 We develop constrained iterative LQR for urban autonomous driving. |

|

Source code from Jon Barron. |